Constellation Software, NVIDIA vs Google TPU, Salesforce, Honeywell Aerospace

This email summarises recent primary research on four topics:

- Constellation Software and public-sector customer switching costs and churn

- Salesforce and examples of the types of agentic workflows customers are deploying today

- NVIDIA vs Google TPU training and inference performance per $ per watt

- Honeywell Aerospace commercial vs business jet vs defense OE and aftermarket pricing comparison

Constellation Software & Public Customer Stickiness

We’ve recently published multiple interviews that provide perspective on the switching costs of public-sector customers, such as public transit authorities, utilities, and other government authorities, that use Constellation's software. We estimate ~40% of CSU's revenue comes from public-sector customers across Volaris, Modaxo, Harris, Jonas Government and Emergency Services, and Vela.

An interesting observation from the interviews is the preference of public authorities for custom software:

The freight rail market and New York City Transit are two that come to mind and have some custom applications that they build themselves. The freight companies do almost everything themselves because they think they are unique and truly need a custom solution. Some of it might, but I don't know that all of it does. They build a lot of stuff themselves. - Former Portfolio Manager, Trapeze

This reminded us of our CEO reference check on Mark Miller; he led Constellation’s push into custom software to increase customer stickiness.

I'll share an example that shifted his perspective and certainly changed mine. I grew up in a software world where you build it once and sell it many times, without customization. If you want something new, we'll enhance our software, but it will be part of the deal because we only want one source code. Mark started having customers in our agriculture business, like ConAgra and another big company with a three-letter name. He advocated for custom builds if the customer wanted them. One business paid our company around a million dollars in services to develop custom software. I wondered why we would want to build custom software, as it seemed like more work for us. He explained that we could charge whatever we wanted for maintenance on that custom software, and it would never be replaced by someone else. We become ingrained with our customer, and it's worth it because it strengthens our position and broadens the moat around us. We can charge whatever we want for maintenance because it's mission-critical. This mindset was a significant change. We're no longer just building once and selling many times. If a one-off can raise our maintenance base, we should do it because that's the strength of our company. - Former Portfolio Manager, Trapeze US

A former Portfolio Manager at Modaxo, a Volaris-owned transportation-focused group, explains that annual maintenance for Modaxo’s software was below 0.1% of each public transit customer’s total operating budget:

"A while back, Mark Miller made us look at the percentage of our annual maintenance relative to the customer's total budget, and we were all below 0.1%. Even customers with maintenance over a million dollars a year—which to us is a tremendous amount—were still below 0.1% of their total operating budget. What seems like a big number on its face is not a large portion of the customer's total spend. The point of that exercise, which every business unit at Volaris went through, was to become less sensitive to large maintenance increases; they're still such a small part of the customer's total annual spend. We sometimes are too sensitive to these big numbers because they seem large to us, but in the grand scheme of things for the customer, it's still such a small portion of their annual budget that maybe it shouldn't matter - Former Portfolio Manager, Trapeze

Modaxo’s products, like most other public-sector software, are not simple desktop applications:

If everyone wanted to leave tomorrow, it would take them a decade to get off of the software we use. There is no hockey stick. We are so integrated it is like a lymphatic system. It is not an app or a desktop application or some enterprise application where you can write some Python code and a GUI interface. If I was in the app business, all these offshore companies in India - Former Portfolio Leader at Harris, Constellation Software

The combination of low cost relative to benefit, mission criticality, and custom software leads to sub-2% annual attrition in the public sector:

For us, in our modeling and forecasting, our churn was 18 years. We planned that customers would change systems every 18 years, which is quite long. We did not have a lot of churn; our companies and almost every company we acquired had sub-2% attrition, so very low churn. The switching costs are very high, particularly in the enterprise, because you are dealing with a transit agency that will have several thousand drivers and another several thousand employees. All of them have to be retrained on a new application. It is a costly undertaking, even on the technical side, to move and convert the data into a new system and rebuild all your integrations. There are a lot of factors that lead to very high switching costs. Again, 18 years is what we used in our planning because we just didn't see a lot of churn. - Former Portfolio Manager, Trapeze US
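The relationship between an annual attrition rate and an expected customer lifetime can be sketched as below. This assumes a constant annual attrition rate, which is our simplification and not something the interviewee states; under that assumption, expected lifetime is roughly one divided by the attrition rate. The function names are ours.

```python
def implied_lifetime_years(annual_attrition: float) -> float:
    """Expected customer lifetime under a constant annual attrition rate."""
    return 1.0 / annual_attrition


def implied_annual_attrition(lifetime_years: float) -> float:
    """Annual attrition rate implied by an expected customer lifetime."""
    return 1.0 / lifetime_years


def retained_after(annual_attrition: float, years: int) -> float:
    """Fraction of an initial customer cohort still retained after `years`."""
    return (1.0 - annual_attrition) ** years


# Figures from the quote: an 18-year planning horizon and sub-2% attrition.
print(f"Attrition implied by 18-year lifetime: {implied_annual_attrition(18):.1%}")
print(f"Lifetime implied by 2% attrition: {implied_lifetime_years(0.02):.0f} years")
print(f"Cohort retained after 18 years at 2%: {retained_after(0.02, 18):.0%}")
```

On this simple model, the 18-year planning assumption is more conservative than the observed sub-2% attrition would suggest.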

While many worried about Mark Leonard stepping back, our CEO reference check suggests Miller may have taken the lead at an opportune time. A former Harris executive makes a similar observation:

"What I would do is lean more into AI, but I would have some mandates, which I think Miller is more heavy-handed about. Mark Leonard was hands-off, like total autonomy. He would never tell you what to do. Even his direct reports - figure it out. Perseus, you are not acquiring, you are not growing enough. Mark never had too many difficult conversations. He would let the free market sort it out. He trusts you. But Mark Miller I think will be more heavy-handed. - Former Portfolio Leader at Harris

Salesforce

This interview with a former Salesforce executive who has over 20 years of experience selling and implementing Salesforce products provides a lens into how public-sector customers are adopting AI.

For example, London's Metropolitan Police has deployed a customer service agent to answer non-emergency questions and capture details of minor crimes.

For this type of product, a simple record is made of the minor crime. The police don't necessarily need to immediately identify the person making the complaint.

On the one hand, public safety is sensitive, so there are complexities there. You have to be careful how you guide and put guardrails on the agent. On the other hand, this is not necessarily as a client - HMRC or DWP, where they need to know about you before you interact with them. Police don't need that so it makes it much easier in terms of identifying data. They need the repository of the record, the knowledge base. It creates a record. It might know you already as William Barnes and match you, but that is not the point in this use case. That is why it is easier to roll out quickly. - Former Regional Vice President, Public Sector Digital Transformation, Salesforce

It’s more challenging to directly link the person complaining with the existing CRM database:

There were databases it talked to, but integrating is easier than harmonizing. Harmonizing means identity resolution and matching of data types, which is where the complexity lies. There are integrations at play here, and those were set up for the Victim Journey. This agentic use case simply rode on top of the integrations that were there. - Former Regional Vice President, Public Sector Digital Transformation, Salesforce

This illustrates the central obstacle to scaling agentic workflows in the enterprise: agents need to sit on top of clean, connected data. Spinning up a chatbot that logs queries into a separate database is easy. The harder and more valuable task is integrating an agent into existing Salesforce workflows, where it must reconcile identities, harmonize records, and operate across messy CRM data. That challenge also helps explain Salesforce’s $8 billion acquisition of Informatica.

The work to harmonize the data is where AI would be immensely powerful, and it is nowhere near there yet. The work of creating these matching rules, doing some degree of data cleanup and data alignment, filling in gaps, and merging datasets - AI can help with some of them. There are great tools. Informatica has a very powerful set of tools to help with that. Data Cloud does some of that. But the hard work still needs to happen, maybe not all of it and not all at once. That problem did not go away. You cannot simply roll up with Agentforce, Sierra or any agentic solution and say you are glad you didn't fix all this technical debt or data debt because now you don't need to…If you think you can just glue an agent on top of your garbage that you have today and be glad you never solved your problem, you are severely mistaken and in for an expensive surprise. - Former Regional Vice President, Public Sector Digital Transformation, Salesforce

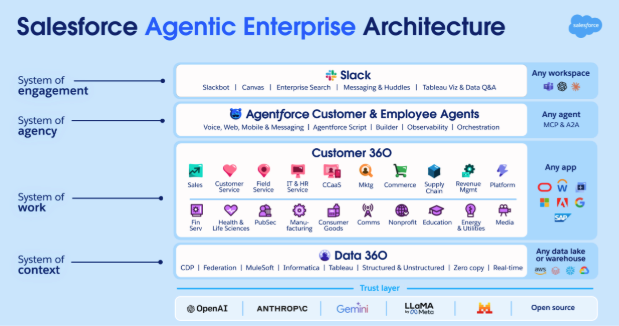

Salesforce is all-in on agentic. We will be covering Agentforce use-cases across industries in more detail over the coming months.

NVIDIA vs Google TPU: Training Performance per $ per Watt

We've published multiple interviews on NVIDIA's GPUs vs ASICs.

This interview with a former NVIDIA data center architect explores why Google’s TPU is gaining share:

Google did very well, and Gemini 3 itself is a good model. The hardware they used and the performance, as well as the TCO (Total Cost of Ownership), is definitely lower because it is in-house. Now Meta is very much interested in trying out Google TPU. Nvidia is a chip company, and Google was more of a systems and software company. Google started making chips, and Nvidia started making systems. But the way Google has built the system, they created these racks and connected them through optical, what they call the interconnect, which is proprietary. I will give you the data, but the point is that it has a lot of bandwidth. There are four ICI links, like 9.6 terabits per second. Nvidia has NVLink, which is also proprietary. But what Google did differently is they went with 9200ish chips, and they scaled out with a large number of computes, connecting them through these fiber channels. The advantage is they now have a bigger cluster that can work with each other. Google has always prioritized reliability. - Former Data Center Product Architect and Senior Director at Nvidia

Google’s effective system design compensates for the TPU’s slightly weaker chip-level performance relative to Blackwell:

Google built a very good system. The chip is fine; it is a little bit worse than Blackwell, but good enough. However, when they built the system, the system is excellent, especially how they put all the clusters together. That is amazing. Of course, the goal is to lower the TCO. - Former Data Center Product Architect and Senior Director at Nvidia

One interesting observation about Jensen:

I think the price pressure will start now. OpenAI, for instance, could say they are already using AMD for inference and could use TPUs for training, demanding a discount. Jensen hates lowering prices. When I suggested lowering the price for Grace by $20, he was quite upset. He might resort to leasing GPUs or other strategies to reduce costs, but ultimately, he will have to lower prices. Currently, renting GPUs in the cloud is very expensive. - Former Data Center Product Architect and Senior Director at Nvidia

The interview goes on to explore how NVIDIA’s market share will evolve, inference vs training competition, and Google and Meta’s positioning.

Honeywell

We've published multiple interviews on Honeywell’s Aerospace spin.

One area of focus in our research is the difference between commercial, business jet, and defense OE and aftermarket pricing. For most Honeywell systems, commercial OE margins are single-digit percentages, or even negative for platforms like the A350:

Honeywell loses about $500,000 for each ship set of equipment delivered to Airbus for the A350. Honeywell doesn't expect to profit from the A350 program until most production deliveries are complete, and then they can focus on spares, overhauls, and repairs. - Former Senior Director, Honeywell

On the other hand, the relative lack of competition in the business jet market leads to higher OE and aftermarket margins for Honeywell’s APUs and engines. In avionics, Honeywell’s OE margins can be as high as engine aftermarket gross margins:

In business aviation, on the aftermarket, Honeywell makes a 65 to 70% margin on the ~$1.1 billion APU engine. In airlines, it is usually around 35%, almost half. - Former VP & GM, Honeywell Aerospace

Defense aftermarket pricing is very different from both commercial and business jet pricing. OEMs attempt to argue “commerciality” to earn margins similar to those of the commercial business:

The leverage in the aftermarket business for companies like Honeywell is commerciality. Honeywell might argue that the APU, a million-dollar small turbine engine for the F22, is similar to those for commercial airplanes, justifying a commercial price. Even if certified cost and pricing data don't show that profitability level, we argue commercially it's fair, and we win many of those arguments. - Former Senior Director, Honeywell

We also discuss how Jim Currier, the CEO of the Aero spin, approaches aftermarket pricing:

He [Jim Currier] kept asking why not increase it to 12%, 15%, or even 20% - Former VP & GM, Honeywell Aerospace


© 2026 In Practise. All rights reserved. This material is for informational purposes only and should not be considered as investment advice.